We’re all talking about using AI to be more efficient PMs: summarizing user feedback, drafting PRDs, you name it. But the more profound shift isn’t in how we work; it’s in what we build.

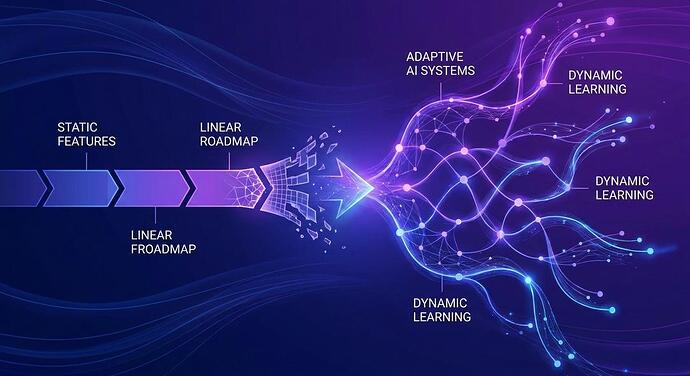

Traditionally, our roadmaps are lists of deterministic features. We build X, the user gets Y. It’s binary; it works or it doesn’t. But with AI-powered products, we’re not shipping static features anymore. We’re shipping systems that learn and evolve. The product’s behavior is probabilistic, not fixed.

This fundamentally challenges our concept of a roadmap. How do you roadmap a system that’s never truly ‘done’? If a core ‘feature’ is an ML model that continuously improves with data, our roadmap needs to reflect learning objectives or capability milestones rather than a checklist of features. Success metrics also shift—from feature adoption to model accuracy, user trust, and the quality of the system’s autonomous decisions.

Defining those objectives and metrics takes much closer collaboration between PMs, data scientists, and engineers, who have to agree on what ‘good’ looks like when the outcome isn’t predictable.

How are you adapting your roadmapping process and success metrics for products that learn and evolve, rather than just sit there?